ArXiv Strikes Back Against AI-Generated Academic Papers with One-Year Bans

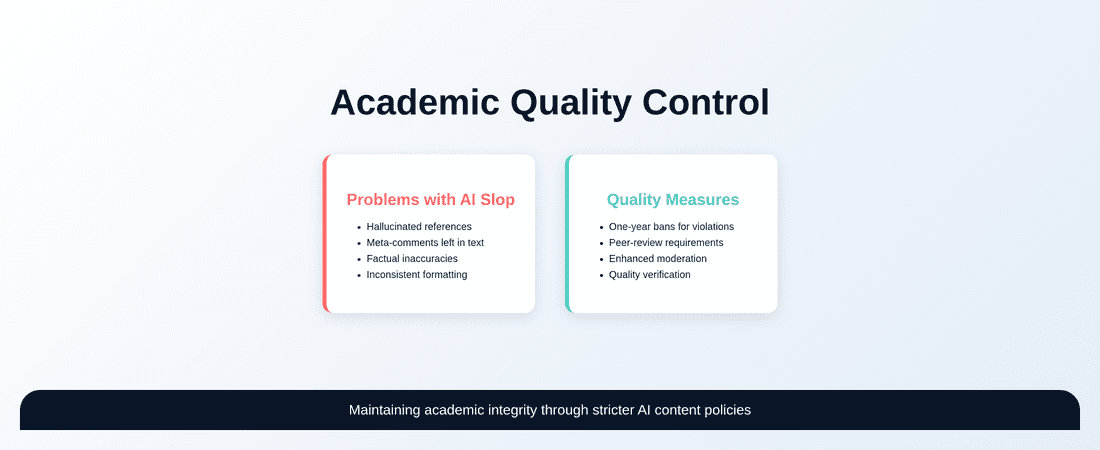

ArXiv is introducing one-year bans for researchers who submit AI-generated papers without checking them, targeting ‘AI slop’ such as hallucinated references and unedited LLM output. Subsequent submissions from banned authors must first be accepted at a reputable peer-reviewed venue, a significant step toward maintaining academic publishing standards in the AI era.

Academic publishing is facing a reckoning as artificial intelligence infiltrates scholarly research. ArXiv, the influential preprint repository that hosts millions of academic papers, has announced stringent new measures to combat what insiders call “AI slop” — poorly generated content that researchers submit without proper oversight.

The New ArXiv Policy: Zero Tolerance for Unchecked AI Content

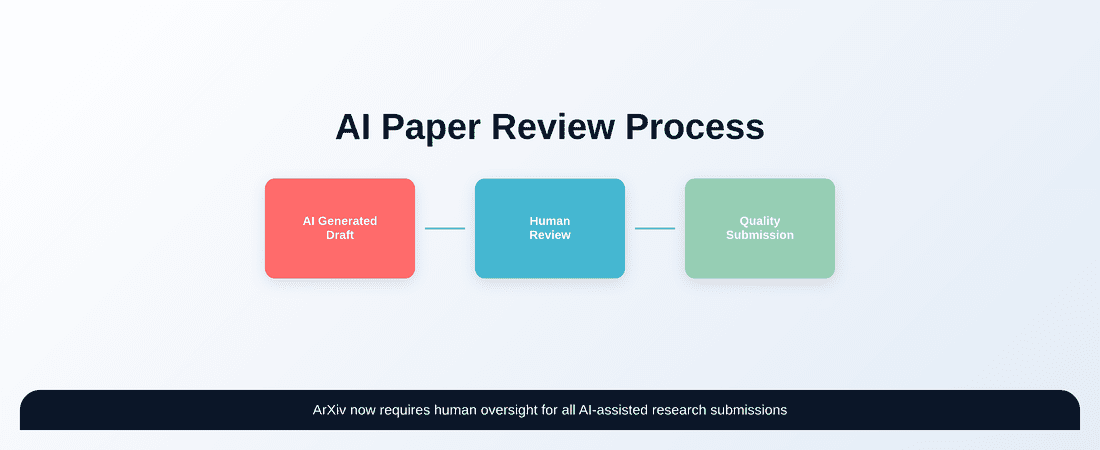

According to reporting by The Verge at https://www.theverge.com/science/931766/arxiv-ai-slop-ban-researchers, ArXiv is implementing a one-year ban policy for researchers who submit papers containing “incontrovertible evidence that the authors did not check the results of LLM generation.” This includes papers with hallucinated references or “meta-comments” left by large language models that authors failed to remove or verify.

Thomas Dietterich, ArXiv’s section chair for computer science, outlined the policy changes on social media, emphasizing that subsequent submissions from banned authors must first be accepted at “a reputable peer-reviewed venue” before they can be posted to the platform.

The move represents a significant escalation in the ongoing battle against low-quality AI-generated content flooding academic repositories. For researchers in India’s rapidly growing academic sector, these changes signal a need for more rigorous oversight of AI-assisted research tools.

What Constitutes AI Slop in Academic Papers?

The term “AI slop” refers to content generated by artificial intelligence systems that authors submit without proper verification or editing. Common indicators include:

- Hallucinated references: Citations to papers that don’t exist, often created by AI models that confidently generate fake scholarly sources

- Meta-comments: Instructional text like “Please write a conclusion” or “Add more references here” that authors forgot to remove from AI-generated drafts

- Inconsistent formatting: Abrupt changes in writing style or citation formats that suggest multiple AI tools were used

- Factual inaccuracies: Claims or data points that sound authoritative but have no basis in reality
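Of the indicators above, leftover meta-comments are the easiest to screen for mechanically. The following is a minimal sketch, not ArXiv's method: the phrase list and the function name `find_meta_comments` are illustrative assumptions, and a real check would need a longer list tuned to the drafts in question.

```python
import re

# Hypothetical phrase list (an assumption for illustration, not an official one).
# Extend it with whatever leftover text tends to show up in your own drafts.
META_COMMENT_PATTERNS = [
    r"\bas an ai language model\b",
    r"\bplease write a conclusion\b",
    r"\badd more references here\b",
    r"\bwould you like me to\b",
]


def find_meta_comments(text):
    """Return (line_number, line) pairs for lines matching any pattern."""
    compiled = [re.compile(p, re.IGNORECASE) for p in META_COMMENT_PATTERNS]
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if any(p.search(line) for p in compiled):
            hits.append((number, line.strip()))
    return hits


# Made-up draft text used only to exercise the function.
draft = """We evaluate the method on three datasets.
Please write a conclusion
Results are summarized in Table 2.
Would you like me to expand this section?"""

for number, line in find_meta_comments(draft):
    print(f"line {number}: {line}")
```

A scan like this only catches phrases someone thought to list. It can flag a draft for a closer look, but it cannot show that a draft is clean.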

The proliferation of AI-generated content without human oversight threatens the fundamental integrity of academic discourse and peer review processes.

For Indian researchers, who contribute significantly to global academic output, understanding these quality markers becomes crucial for maintaining credibility in international academic circles.

The Broader Impact on Academic Publishing

ArXiv’s decision reflects growing concerns across the academic publishing ecosystem. While AI tools can legitimately assist researchers in drafting, summarizing, and organizing their work, the complete abdication of human oversight creates several problems:

Quality Degradation

When researchers submit unvetted AI content, the overall quality of academic discourse suffers. Moderators and peer reviewers waste time weeding out obviously flawed papers, and genuine research gets buried under a volume of low-quality submissions.

Trust Erosion

The academic community relies on trust and shared standards. When papers contain fabricated references or nonsensical claims, it undermines confidence in the entire system of scholarly communication.

Resource Strain

Platforms like ArXiv operate on limited resources. Processing and moderating AI-generated content diverts attention from supporting legitimate research dissemination.

Implications for Indian Academic Community

India’s research output has grown rapidly in recent years, with institutions increasingly embracing digital tools and AI assistance. The ArXiv policy changes carry particular significance for Indian researchers:

Institutional Reputation

Indian universities and research institutions have worked hard to build international recognition. Faculty members who receive ArXiv bans could damage their institutions’ reputations and collaboration prospects.

Funding Considerations

Many research grants, both domestic and international, consider publication records in funding decisions. A ban from ArXiv could affect researchers’ ability to demonstrate productivity and impact.

Career Implications

For early-career researchers, academic misconduct allegations can have long-lasting consequences on job prospects and professional advancement.

Best Practices for AI-Assisted Academic Writing

Rather than avoiding AI tools entirely, researchers can adopt responsible practices that leverage these technologies while maintaining academic integrity:

Transparent Usage

Clearly disclose when and how AI tools were used in the research process. Many journals now require such disclosures as standard practice.

Rigorous Verification

Fact-check all AI-generated content, especially references, data points, and technical claims. Use AI as a starting point, not a final product.

Human Oversight

Maintain meaningful human involvement in all stages of writing and analysis. AI should augment human expertise, not replace it.

Quality Control Processes

Implement systematic review procedures to catch AI-generated errors before submission. This includes checking references, verifying claims, and ensuring consistent voice throughout the paper.
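One possible piece of such a procedure is a pre-submission pass over the reference list that flags entries with no DOI or arXiv identifier, since those are the hardest to confirm. This is a rough sketch under stated assumptions: the function name, the regular expressions, and the sample entries are placeholders invented for illustration. It also does not confirm that an identifier resolves to a real paper, so every reference still needs a human to look it up.

```python
import re

# Loose patterns for a DOI (10.xxxx/...) and a modern arXiv ID (arXiv:2401.01234).
# Both are simplifying assumptions; older arXiv IDs use a different format.
DOI_RE = re.compile(r"\b10\.\d{4,9}/\S+")
ARXIV_RE = re.compile(r"\barXiv:\d{4}\.\d{4,5}(?:v\d+)?\b", re.IGNORECASE)


def references_needing_manual_check(references):
    """Return the references that carry no DOI or arXiv ID to look up."""
    flagged = []
    for ref in references:
        if not (DOI_RE.search(ref) or ARXIV_RE.search(ref)):
            flagged.append(ref)
    return flagged


# Placeholder entries, not real citations.
refs = [
    "Author A. Placeholder title one. doi:10.1234/example.5678",
    "Author B. Placeholder title two. arXiv:2401.01234",
    "Author C. Placeholder title three. Journal of Examples, 2023.",
]

for ref in references_needing_manual_check(refs):
    print("verify by hand:", ref)
```

Entries that pass this filter are not verified. They are simply easier to check, because the identifier can be pasted into a resolver and compared against the cited title and authors.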

The Future of Academic Publishing in the AI Era

ArXiv’s policy represents just one response to the challenges posed by AI in academic publishing. As these technologies become more sophisticated, the academic community will need to evolve its standards and practices accordingly.

The emphasis should shift toward teaching researchers how to use AI tools responsibly rather than banning their use entirely. That shift also calls for better detection methods, clearer guidelines for acceptable use, and a culture of transparency around AI assistance.

For the Indian academic community, this moment presents an opportunity to lead by example in responsible AI adoption. By establishing high standards for AI-assisted research and maintaining rigorous quality control, Indian institutions can continue building their international reputation while embracing technological innovation.

The ArXiv policy sends a clear message: the academic community values quality and integrity over quantity and convenience. As AI tools become more prevalent in research workflows, maintaining these standards will be essential for preserving the credibility and value of scholarly communication worldwide.