Stop Prompting, Start Delegating: The Rise of Agentic AI

Here’s a question that’s been rattling around my head for the last few months: What happens when AI stops waiting for your instructions and starts figuring things out on its own?

Because that’s exactly what’s happening right now, and most people haven’t noticed. Welcome to the world of agentic AI.

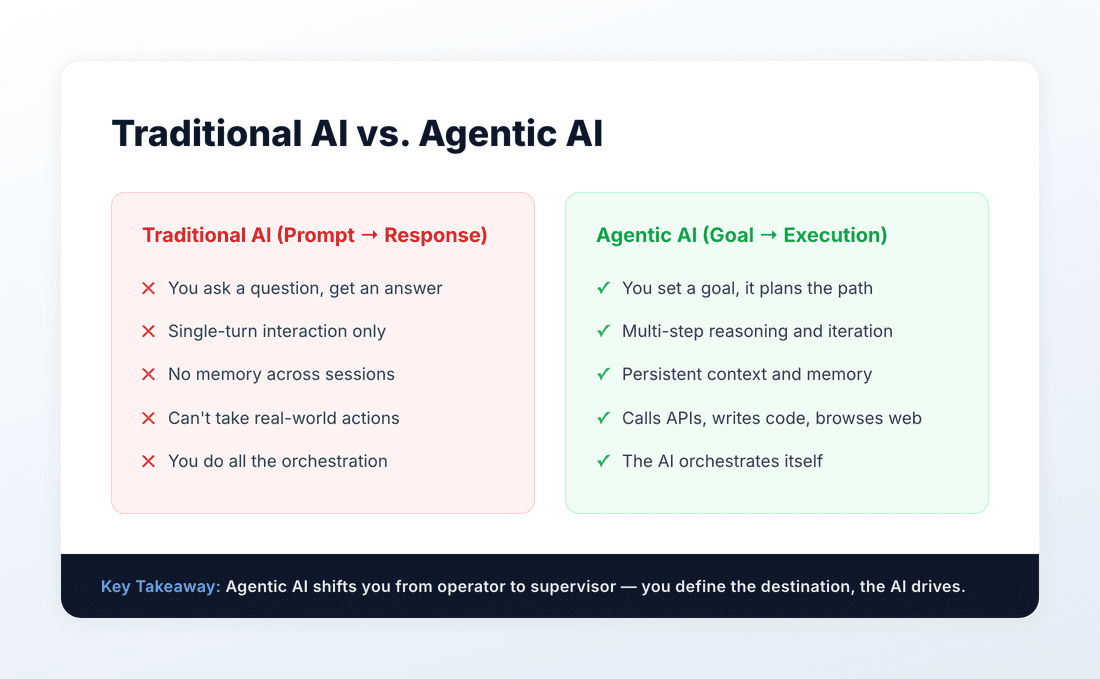

We’ve spent the last two years getting comfortable with AI as a really smart autocomplete — you type a prompt, it spits out a response, and you go from there. ChatGPT, Claude, Gemini — they’re all brilliant at this. But they share one fundamental limitation: they only do what you tell them to do, one turn at a time.

Agentic AI flips that model on its head. Instead of giving AI a single instruction and getting a single response, you give it a goal — and it figures out the steps, executes them, course-corrects when things go wrong, and delivers the result. No hand-holding required.

This isn’t science fiction. It’s happening right now, in tools you can use today. And if you’re not paying attention, you’re about to be blindsided by the biggest shift in how we work since the internet went mainstream.

Let me break it down.

What Exactly Is Agentic AI? (And Why Should You Care?)

Let’s get the definition out of the way without the Silicon Valley jargon.

Agentic AI refers to AI systems that can autonomously plan, reason, use tools, and take multi-step actions to achieve a goal. Think of it as the difference between a calculator and an accountant. A calculator does exactly what you punch in. An accountant understands your financial goals, gathers the right data, runs the numbers, spots problems, and comes back with recommendations.

Traditional AI is the calculator. Agentic AI is the accountant.

Here’s what makes an AI system “agentic”:

Autonomy: It can decide what to do next without you telling it every step.

Tool use: It can call APIs, search the web, execute code, read files, and interact with external systems.

Planning: It breaks complex goals into smaller tasks and tackles them in order.

Self-correction: When something fails, it adjusts its approach instead of just throwing an error.

Memory: It remembers context across interactions, building on previous work.

So what does this mean for you? It means the role of the human is shifting from “operator” to “supervisor.” You’re no longer typing out every instruction — you’re setting direction and reviewing output. That’s a fundamentally different skill set, and the people who develop it early will have an enormous advantage.

The Anatomy of Agentic AI: How It Actually Works

If you want to understand agentic AI — not just use it, but actually understand it — you need to know what’s happening under the hood. Don’t worry, I’ll keep this practical.

Whether it’s Claude Code writing software or an AutoGPT clone trying to book your flights, most agentic AI systems can be described with the same basic architecture. I call it the 4-Layer Agentic Stack.

Layer 1: The Foundation Model (The Brain)

This is the large language model at the core: GPT-4, Claude, Gemini, Llama, whatever. It’s the reasoning engine that processes your goal, understands context, and decides what to do next. The quality of the foundation model largely determines how smart your agent is. This is why agents built on frontier models like Claude or GPT-4 tend to outperform those built on smaller models, especially on long, multi-step tasks where the reasoning gap is still large.

Layer 2: The Tool Layer (The Hands)

An LLM by itself can only generate text. The tool layer is what gives it the ability to do things — search the web, execute Python code, read and write files, call APIs, query databases, send emails. Without tools, you have a chatbot. With tools, you have an agent. The breadth and quality of available tools defines what the agent can actually accomplish.
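To make the “hands” idea concrete, here is a minimal Python sketch of a tool layer. It assumes the model emits each tool call as JSON shaped like `{"tool": ..., "args": ...}`; the `TOOLS` registry, the `tool` decorator, `dispatch`, and the two toy tools are hypothetical illustrations, not any provider’s or framework’s API (each vendor defines its own tool-call format).

```python
import json
from typing import Callable, Dict

# Hypothetical registry mapping tool names to plain Python functions.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add(a: float, b: float) -> str:
    return str(a + b)


@tool
def word_count(text: str) -> str:
    return str(len(text.split()))


def dispatch(tool_call_json: str) -> str:
    """Run a tool call the model emitted as JSON: {"tool": name, "args": {...}}."""
    call = json.loads(tool_call_json)
    name = call.get("tool")
    if name not in TOOLS:
        # Report the problem as text so the model can try something else.
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**call.get("args", {}))


print(dispatch('{"tool": "add", "args": {"a": 2, "b": 3}}'))  # 5
print(dispatch('{"tool": "word_count", "args": {"text": "hello agentic world"}}'))  # 3
```

Returning an unknown-tool error as text instead of raising is a deliberate choice: it lets the orchestration loop hand the mistake back to the model so it can correct itself.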

Layer 3: The Memory Layer (The Notebook)

Agents need to remember things — what you asked for, what they’ve already tried, what worked and what didn’t. This layer typically includes short-term memory (the current conversation context) and long-term memory (stored in a vector database or file system). Memory is what prevents the agent from going in circles and lets it build on previous work across sessions.
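Here is one way the two tiers might look in code. This is a sketch under simplifying assumptions: `AgentMemory` is a hypothetical class, short-term memory is a fixed-size window of recent messages, and long-term recall uses naive word overlap as a stand-in for the embedding similarity search a vector database would perform.

```python
from collections import deque
from typing import List


class AgentMemory:
    """Hypothetical two-tier memory for an agent."""

    def __init__(self, window: int = 4):
        # Short-term: the most recent messages, like a bounded context window.
        self.short_term = deque(maxlen=window)
        # Long-term: persistent notes (a vector DB or files in practice).
        self.long_term: List[str] = []

    def add_message(self, msg: str) -> None:
        self.short_term.append(msg)  # oldest message drops off when full

    def save_note(self, note: str) -> None:
        self.long_term.append(note)

    def recall(self, query: str, k: int = 1) -> List[str]:
        """Return the k notes sharing the most words with the query."""
        q = set(query.lower().split())
        ranked = sorted(self.long_term,
                        key=lambda n: len(q & set(n.lower().split())),
                        reverse=True)
        return ranked[:k]

    def build_context(self, query: str) -> str:
        """Combine recalled notes and recent messages for the next model call."""
        notes = [f"[note] {n}" for n in self.recall(query)]
        return "\n".join(notes + list(self.short_term))


mem = AgentMemory(window=2)
mem.save_note("deploy script fails without the --prod flag")
mem.save_note("user prefers tabs over spaces")
for msg in ["user: fix the deploy", "agent: running deploy", "tool: exit code 1"]:
    mem.add_message(msg)
print(mem.build_context("why does the deploy fail"))
```

The window keeps the prompt small while the recalled note carries a lesson from an earlier session into this one, which is exactly what stops an agent from repeating a mistake it has already made.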

Layer 4: The Orchestration Layer (The Manager)

This is the secret sauce. The orchestration layer is the planning loop — the logic that determines what step to take next, evaluates whether the previous step succeeded, handles errors gracefully, and decides when the overall goal has been achieved. Frameworks like LangGraph, CrewAI, and Anthropic’s Agent SDK primarily operate at this layer, giving you pre-built patterns for common agent architectures.
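Stripped of any framework, the planning loop can be sketched in a few lines of Python. In this hypothetical example, `scripted_decide` stands in for the LLM: each turn it either requests a tool or declares the goal achieved. Errors are fed back into the history instead of crashing the run, and `max_steps` guards against endless loops. Real frameworks layer much more on top (persistent state, branching graphs, multi-agent roles), each with its own API; this only shows the core idea.

```python
from typing import Callable, Dict, List, Tuple

History = List[Tuple[str, str]]  # (tool name, result) pairs the model sees


def run_agent(goal: str,
              decide: Callable[[str, History], dict],
              tools: Dict[str, Callable[[str], str]],
              max_steps: int = 5) -> str:
    """Plan-act-evaluate loop: ask for a decision, act, record, repeat."""
    history: History = []
    for _ in range(max_steps):
        decision = decide(goal, history)
        if decision["type"] == "final":
            return decision["answer"]  # the model judged the goal achieved
        name, arg = decision["tool"], decision["arg"]
        try:
            result = tools[name](arg)
        except Exception as exc:
            result = f"error: {exc}"  # feed failures back instead of crashing
        history.append((name, result))
    return "stopped: step limit reached"  # guard against endless loops


# --- Hypothetical stand-ins for a real tool and a real LLM ---
def fetch(url: str) -> str:
    if url.startswith("http://"):
        raise ValueError("insecure URL rejected")
    return "page text about agents"


def scripted_decide(goal: str, history: History) -> dict:
    if not history:
        return {"type": "tool", "tool": "fetch", "arg": "http://example.com"}
    _, last_result = history[-1]
    if last_result.startswith("error"):
        # Self-correction: try a different approach after a failure.
        return {"type": "tool", "tool": "fetch", "arg": "https://example.com"}
    return {"type": "final", "answer": f"summary of: {last_result}"}


print(run_agent("summarize example.com", scripted_decide, {"fetch": fetch}))
# summary of: page text about agents
```

The run takes three turns: the first fetch fails, the failure is recorded, the “model” adjusts and retries, and only then does it declare success.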

Understanding this stack matters because it gives you a framework for evaluating any agentic tool. When someone pitches you an “AI agent,” you can immediately ask: What model is it using? What tools does it have access to? How does it handle memory? What’s the orchestration pattern? These four questions will tell you most of what you need to know.

Real-World Agentic AI You Can Use Right Now

Let’s get out of theory and into practice. Here are agentic AI systems that are already changing how people work — not in some research lab, but in production, today.

Coding Agents

This is where agentic AI has made the most visible impact. Tools like Claude Code, Cursor, and GitHub Copilot’s agent mode don’t just autocomplete your code. They explore your codebase, plan implementations across multiple files, write tests, debug failures, and run your test suite to verify their work. I’ve personally watched Claude Code take a feature request, read the existing code, plan the implementation, write it across six files, run the tests, fix two failures, and submit the result, all from a single prompt. That’s not autocomplete. That’s an autonomous developer.

Research Agents

Perplexity Pro and ChatGPT’s Deep Research mode are early examples of research agents. You give them a complex question, and instead of generating a single response, they plan a research strategy, search multiple sources, synthesize findings, identify contradictions, and produce a comprehensive report with citations. What used to take hours of manual research now takes minutes.

Workflow Automation Agents

Platforms like n8n, Make, and Zapier AI are evolving from simple “if-this-then-that” automation into full agentic workflow engines. You can now describe a workflow in natural language, such as “When a new support ticket comes in, categorize it, draft a response, check our knowledge base for relevant articles, and escalate to a human if sentiment is negative,” and the system can build and run much of the pipeline for you.
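The support-ticket example maps onto a short pipeline. Below is a hedged Python sketch in which simple keyword rules stand in for the LLM calls a real platform would make for categorization and sentiment; the knowledge base, category names, and `handle_ticket` function are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical knowledge base: article title -> keywords it covers.
KNOWLEDGE_BASE = {
    "Resetting your password": {"password", "login", "reset"},
    "Updating billing details": {"billing", "invoice", "card"},
}
BILLING_WORDS = {"billing", "invoice", "card", "refund"}
NEGATIVE_WORDS = {"angry", "terrible", "unacceptable", "furious"}


@dataclass
class TicketResult:
    category: str
    articles: List[str] = field(default_factory=list)
    draft: str = ""
    escalated: bool = False


def handle_ticket(text: str) -> TicketResult:
    words = set(text.lower().replace(",", " ").replace(".", " ").split())
    # 1. Categorize (keyword rule standing in for an LLM classifier).
    category = "billing" if words & BILLING_WORDS else "account"
    # 2. Check the knowledge base for relevant articles.
    articles = [title for title, kws in KNOWLEDGE_BASE.items() if words & kws]
    # 3. Draft a response that points to those articles.
    if articles:
        draft = "Thanks for reaching out. These articles may help: " + ", ".join(articles)
    else:
        draft = "Thanks for reaching out. A teammate will follow up shortly."
    # 4. Escalate to a human if sentiment looks negative.
    escalated = bool(words & NEGATIVE_WORDS)
    return TicketResult(category, articles, draft, escalated)


ticket = handle_ticket("I can't reset my password, this is terrible.")
print(ticket.category, ticket.articles, ticket.escalated)
```

Swapping each keyword rule for a model call changes the quality of the decisions, not the shape of the pipeline, which is why these platforms can expose the steps as configurable blocks.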

Computer-Use Agents

This is the frontier. Agents that can see your screen and control your mouse and keyboard to interact with any application, just like a human would. Anthropic’s Computer Use capability, OpenAI’s Operator, and Google’s Project Mariner are all pushing in this direction. It’s still early and imperfect, but the trajectory is clear — AI agents that can operate any software you can.

The “So What?” — Why This Changes Everything for Knowledge Workers

Here’s where I get opinionated, because I think most coverage of agentic AI misses the point entirely.

The real story isn’t that AI can do more things autonomously. The real story is that the skills that make you valuable are about to change dramatically.

For the last 30 years, knowledge work has rewarded execution speed. The fastest coder, the fastest analyst, the fastest writer — they won. Agentic AI commoditizes execution. When an agent can write code, produce reports, and draft documents at machine speed, being fast at those things is no longer your competitive advantage.

What is your competitive advantage? Three things:

1. Problem framing. The ability to take a vague, messy, real-world situation and turn it into a clear goal that an agent can execute on. This is the new “10x skill.” The person who can look at a business problem and define the right objective will get 10x the output of someone who can’t — because the agent does the execution.

2. Quality judgment. Agents will produce output fast, but they won’t always produce good output. The ability to evaluate, critique, and direct the agent’s work — to know when the code is subtly wrong, when the analysis misses a key variable, when the writing lacks nuance — is irreplaceable. You need deep domain expertise to be a good supervisor.

3. System design. Understanding how to compose multiple agents, tools, and workflows into reliable systems that produce consistent results. This is the new “full-stack” skill — not full-stack developer, but full-stack AI operator.

If you’re a knowledge worker reading this, here’s my honest advice: stop optimizing for speed and start optimizing for judgment. The people who thrive in an agentic world won’t be the ones who type the fastest — they’ll be the ones who think the clearest.

How to Get Started with Agentic AI (Without Getting Overwhelmed)

I know the above might feel intimidating. “Great, I need to learn yet another thing.” I hear you. Here’s the good news: you don’t need to build agents from scratch to benefit from them. Here’s a practical roadmap.

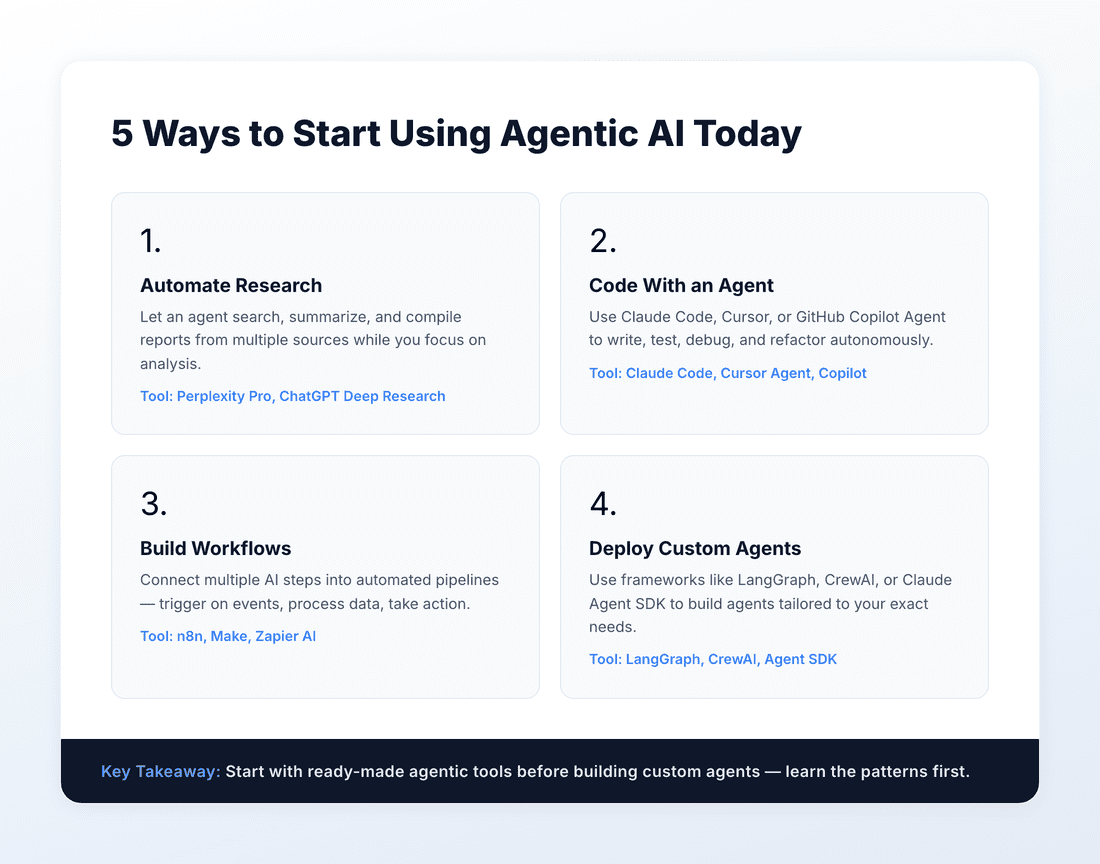

Phase 1: Use Existing Agentic Tools (This Week)

Start with tools that are already agentic but don’t require any setup. Use ChatGPT’s Deep Research for your next research task. Try Claude Code for a coding project. Use Perplexity Pro instead of Google for complex questions. You’ll immediately feel the difference between “I asked AI a question” and “I gave AI a job.” If you’re not sure which tools to try first, check out our guide on getting started with AI in 2026.

Phase 2: Build Simple Workflows (This Month)

Pick one repetitive task in your work and automate it with an agentic workflow. n8n (free to self-host, under a source-available “fair-code” license) is a great place to start. Connect your email, a Google Sheet, and an LLM call, and suddenly you have an agent that categorizes incoming emails, extracts key information, and logs it to a spreadsheet without you lifting a finger.
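Under the hood, that email workflow boils down to three steps per message: categorize, extract, log. This Python sketch assumes a hypothetical `categorize` function in place of the LLM call, treats an order number like `#4821` as the “key information,” and writes CSV to an in-memory buffer that stands in for the Google Sheet. In n8n itself you would wire the same steps together as nodes rather than code.

```python
import csv
import io
import re
from typing import Dict, List


def categorize(subject: str, body: str) -> str:
    """Hypothetical stand-in for the LLM call that labels each email."""
    text = f"{subject} {body}".lower()
    if "invoice" in text or "payment" in text:
        return "finance"
    if "meeting" in text:
        return "scheduling"
    return "other"


def log_emails(emails: List[Dict[str, str]], sheet) -> None:
    """Categorize each email, pull out an order number, and log one row."""
    writer = csv.writer(sheet)
    writer.writerow(["sender", "subject", "category", "order_id"])
    for email in emails:
        match = re.search(r"#(\d+)", email["body"])  # the "key information"
        order_id = match.group(1) if match else ""
        category = categorize(email["subject"], email["body"])
        writer.writerow([email["sender"], email["subject"], category, order_id])


sheet = io.StringIO()  # stands in for the Google Sheet
log_emails([
    {"sender": "ap@vendor.example", "subject": "Invoice due",
     "body": "Payment for order #4821 is due Friday."},
    {"sender": "sam@team.example", "subject": "Sync",
     "body": "Can we move the meeting to 3pm?"},
], sheet)
print(sheet.getvalue())
```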

Phase 3: Explore Agent Frameworks (This Quarter)

If you’re technical, dive into a framework. LangGraph is great if you want fine-grained control. CrewAI is excellent for multi-agent systems where different agents handle different roles. Anthropic’s Agent SDK is clean and well-documented if you’re already in the Claude ecosystem. Build something small but real — a personal research agent, a code review assistant, a meeting prep tool.

The Risks of Agentic AI Nobody’s Talking About

I wouldn’t be doing my job if I didn’t address the elephant in the room. Agentic AI is powerful, but it comes with real risks that the hype cycle conveniently ignores.

Reliability. Agents fail. They misunderstand goals, get stuck in loops, make confident-sounding mistakes, and sometimes take actions you didn’t intend. We’re in the “early automobile” phase — incredibly useful, but you absolutely need to keep your hands on the wheel. Never give an agent unsupervised access to anything you can’t easily undo.
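One practical way to keep your hands on the wheel is an approval gate: reversible actions run freely, while anything on an irreversible list waits for a human. The tool names, `guarded_call`, and the terminal prompt in `ask_human` are hypothetical; in a real setup the approval step might just as well post to a chat channel.

```python
from typing import Callable

# Hypothetical tool names whose effects are hard or impossible to undo.
IRREVERSIBLE = {"delete_file", "send_email", "transfer_funds"}


def guarded_call(action: str, arg: str,
                 run: Callable[[str, str], str],
                 approve: Callable[[str, str], bool]) -> str:
    """Run reversible actions directly; ask a human before irreversible ones."""
    if action in IRREVERSIBLE and not approve(action, arg):
        return f"blocked: {action} needs human approval"
    return run(action, arg)


def ask_human(action: str, arg: str) -> bool:
    """Terminal prompt; anything other than 'y' counts as a refusal."""
    return input(f"Allow {action}({arg})? [y/N] ").strip().lower() == "y"


def fake_run(action: str, arg: str) -> str:
    return f"ran {action}({arg})"


print(guarded_call("read_file", "notes.txt", fake_run, ask_human))  # no prompt needed
```

Defaulting to “no” on anything but an explicit yes means a distracted click or an empty answer blocks the action instead of letting it through.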

Security. An agent with access to your APIs, databases, and email is an agent that can cause real damage if it’s compromised, misconfigured, or just confused. The attack surface expands dramatically when AI can take actions, not just generate text. The principle of least privilege isn’t optional here; it’s critical.
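Least privilege can be enforced at the tool layer by handing each agent a toolbox that contains only what it needs. A minimal Python sketch, with `ScopedToolbox` and the example tools as hypothetical names:

```python
from typing import Callable, Dict, Iterable


class ScopedToolbox:
    """Hypothetical wrapper exposing only the tools one agent is granted."""

    def __init__(self, all_tools: Dict[str, Callable], allowed: Iterable[str]):
        allowed = set(allowed)
        unknown = allowed - set(all_tools)
        if unknown:
            raise ValueError(f"unknown tools: {sorted(unknown)}")
        self._tools = {name: all_tools[name] for name in allowed}

    def call(self, name: str, *args):
        if name not in self._tools:
            raise PermissionError(f"tool {name!r} not granted to this agent")
        return self._tools[name](*args)


ALL_TOOLS = {
    "search_docs": lambda q: f"results for {q!r}",
    "send_email": lambda to: f"email sent to {to}",
    "drop_table": lambda t: f"dropped {t}",
}

researcher = ScopedToolbox(ALL_TOOLS, {"search_docs"})  # read-only agent
print(researcher.call("search_docs", "refund policy"))
try:
    researcher.call("drop_table", "customers")
except PermissionError as err:
    print(err)
```

Because the dangerous tools never enter the researcher’s toolbox, a confused or manipulated model cannot reach them no matter what it asks for.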

Cost. Agentic workflows consume significantly more tokens than single-turn interactions. An agent that plans, executes, evaluates, and retries might make dozens of LLM calls to complete one task. Monitor your usage and set hard limits, especially when experimenting.
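A hard limit can be as simple as a counter that halts the run when either cap is crossed. This Python sketch assumes your LLM client reports token usage per call (provider APIs generally return usage figures, but field names vary); `Budget` and `BudgetExceeded` are hypothetical names.

```python
class BudgetExceeded(RuntimeError):
    """Raised when an agent run crosses its call or token cap."""


class Budget:
    """Hypothetical hard limits for one agent run."""

    def __init__(self, max_calls: int, max_tokens: int):
        self.max_calls = max_calls
        self.max_tokens = max_tokens
        self.calls = 0
        self.tokens = 0

    def charge(self, tokens_used: int) -> None:
        """Record one LLM call's usage and stop the run once a cap is crossed.

        Usage is only known after a call returns, so the call that crosses
        the cap is still counted before the run is halted.
        """
        self.calls += 1
        self.tokens += tokens_used
        if self.calls > self.max_calls or self.tokens > self.max_tokens:
            raise BudgetExceeded(f"{self.calls} calls, {self.tokens} tokens used")


budget = Budget(max_calls=20, max_tokens=10_000)
try:
    while True:  # e.g. an agent stuck retrying the same step
        budget.charge(tokens_used=4_000)  # assumed usage reported per call
except BudgetExceeded as err:
    print("halted:", err)  # halted: 3 calls, 12000 tokens used
```

Raising an exception rather than returning a flag forces the orchestration loop to stop; a retrying agent cannot quietly ignore it.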

Overreliance. The biggest risk of all. When AI handles execution so well, it’s tempting to stop understanding what it’s doing. Don’t. The moment you can’t evaluate the agent’s output is the moment you’ve lost control. Stay technically sharp even as you delegate more.

The Bottom Line on Agentic AI

Agentic AI isn’t coming. It’s here. The question isn’t whether it will change how you work — it’s whether you’ll be the person directing the agents or the person wondering why everyone else suddenly got 10x more productive.

The shift from prompting to delegating is the most important mental model change in the AI era. Master it, and you’ll multiply your output without multiplying your hours. Ignore it, and you’ll find yourself doing manually what others are doing with a single goal statement and an autonomous agent.

My advice? Start this week. Pick one agentic tool. Give it a real task — not a toy example. See what happens. You’ll be amazed at what’s possible, humbled by what still breaks, and motivated to go deeper.

The future belongs to the delegators. Let’s make sure you’re one of them.